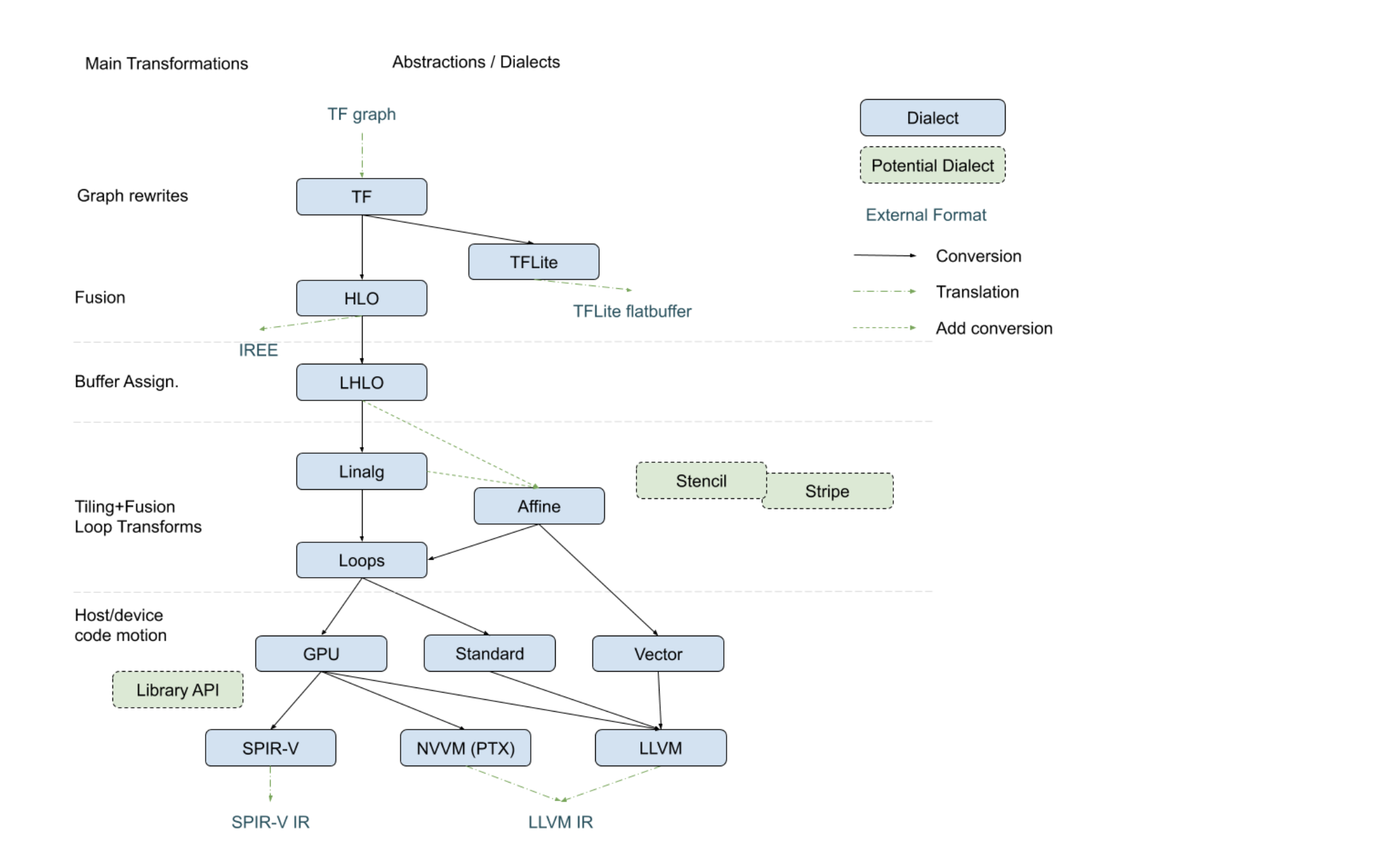

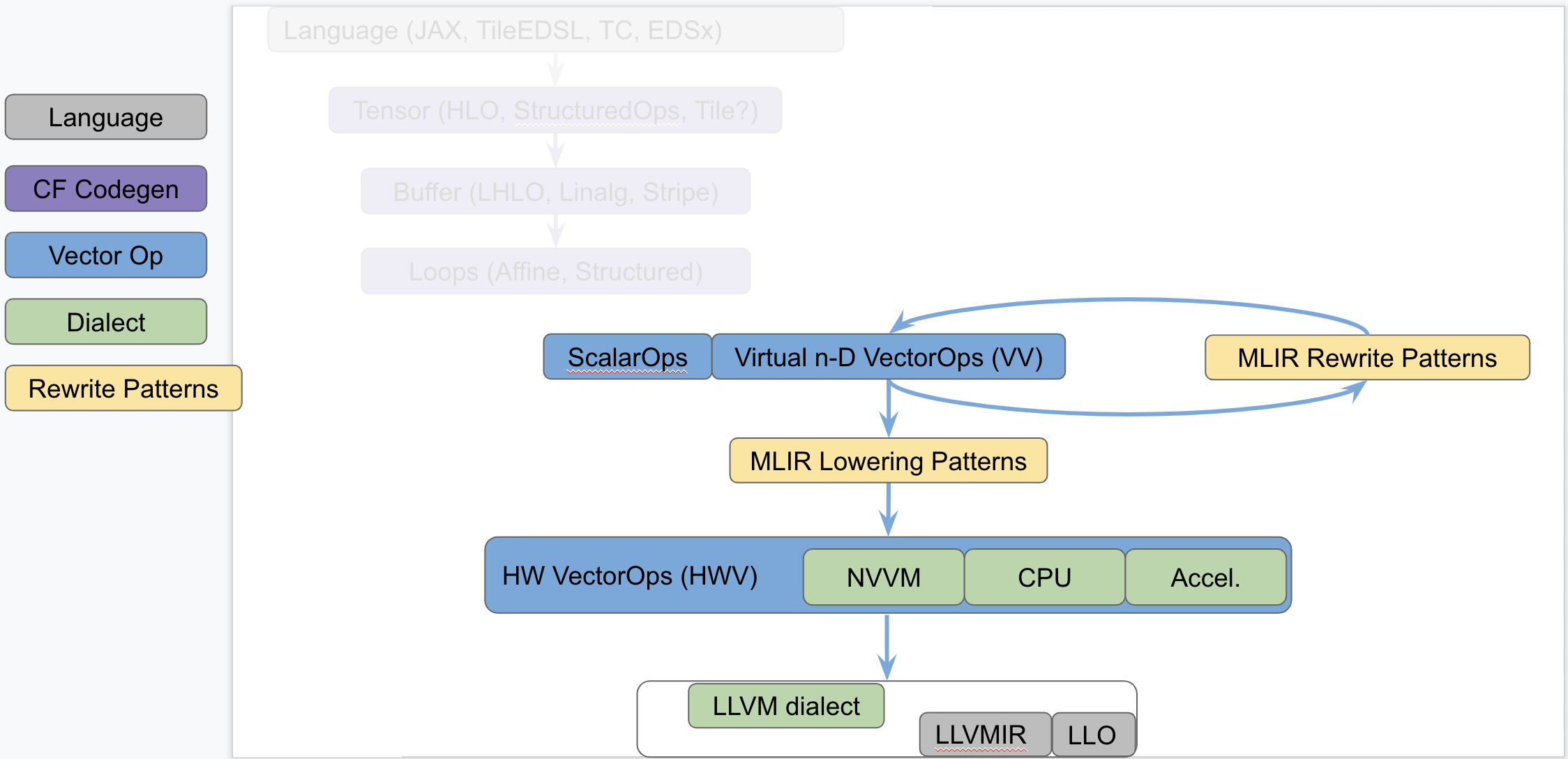

1# 'vector' Dialect 2 3[TOC] 4 5MLIR supports multi-dimensional `vector` types and custom operations on those 6types. A generic, retargetable, higher-order ``vector`` type (`n-D` with `n > 71`) is a structured type, that carries semantic information useful for 8transformations. This document discusses retargetable abstractions that exist 9in MLIR today and operate on ssa-values of type `vector` along with pattern 10rewrites and lowerings that enable targeting specific instructions on concrete 11targets. These abstractions serve to separate concerns between operations on 12`memref` (a.k.a buffers) and operations on ``vector`` values. This is not a 13new proposal but rather a textual documentation of existing MLIR components 14along with a rationale. 15 16## Positioning in the Codegen Infrastructure 17The following diagram, recently presented with the [StructuredOps 18abstractions](https://drive.google.com/corp/drive/u/0/folders/1sRAsgsd8Bvpm_IxREmZf2agsGU2KvrK-), 19captures the current codegen paths implemented in MLIR in the various existing 20lowering paths. 21 22 23The following diagram seeks to isolate `vector` dialects from the complexity 24of the codegen paths and focus on the payload-carrying ops that operate on std 25and `vector` types. This diagram is not to be taken as set in stone and 26representative of what exists today but rather illustrates the layering of 27abstractions in MLIR. 28 29 30 31This separates concerns related to (a) defining efficient operations on 32`vector` types from (b) program analyses + transformations on `memref`, loops 33and other types of structured ops (be they `HLO`, `LHLO`, `Linalg` or other ). 34Looking a bit forward in time, we can put a stake in the ground and venture 35that the higher level of `vector`-level primitives we build and target from 36codegen (or some user/language level), the simpler our task will be, the more 37complex patterns can be expressed and the better performance will be. 38 39## Components of a Generic Retargetable Vector-Level Dialect 40The existing MLIR `vector`-level dialects are related to the following 41bottom-up abstractions: 42 431. Representation in `LLVMIR` via data structures, instructions and 44intrinsics. This is referred to as the `LLVM` level. 452. Set of machine-specific operations and types that are built to translate 46almost 1-1 with the HW ISA. This is referred to as the Hardware Vector level; 47a.k.a `HWV`. For instance, we have (a) the `NVVM` dialect (for `CUDA`) with 48tensor core ops, (b) accelerator-specific dialects (internal), a potential 49(future) `CPU` dialect to capture `LLVM` intrinsics more closely and other 50dialects for specific hardware. Ideally this should be auto-generated as much 51as possible from the `LLVM` level. 523. Set of virtual, machine-agnostic, operations that are informed by costs at 53the `HWV`-level. This is referred to as the Virtual Vector level; a.k.a 54`VV`. This is the level that higher-level abstractions (codegen, automatic 55vectorization, potential vector language, ...) targets. 56 57The existing generic, retargetable, `vector`-level dialect is related to the 58following top-down rewrites and conversions: 59 601. MLIR Rewrite Patterns applied by the MLIR `PatternRewrite` infrastructure 61to progressively lower to implementations that match closer and closer to the 62`HWV`. Some patterns are "in-dialect" `VV -> VV` and some are conversions `VV 63-> HWV`. 642. `Virtual Vector -> Hardware Vector` lowering is specified as a set of MLIR 65lowering patterns that are specified manually for now. 663. `Hardware Vector -> LLVM` lowering is a mechanical process that is written 67manually at the moment and that should be automated, following the `LLVM -> 68Hardware Vector` ops generation as closely as possible. 69 70## Short Description of the Existing Infrastructure 71 72### LLVM level 73On CPU, the `n-D` `vector` type currently lowers to 74`!llvm<array<vector>>`. More concretely, `vector<4x8x128xf32>` lowers to 75`!llvm<[4 x [ 8 x [ 128 x float ]]]>`. 76There are tradeoffs involved related to how one can access subvectors and how 77one uses `llvm.extractelement`, `llvm.insertelement` and 78`llvm.shufflevector`. A [deeper dive section](#DeeperDive) discusses the 79current lowering choices and tradeoffs. 80 81### Hardware Vector Ops 82Hardware Vector Ops are implemented as one dialect per target. 83For internal hardware, we are auto-generating the specific HW dialects. 84For `GPU`, the `NVVM` dialect adds operations such as `mma.sync`, `shfl` and 85tests. 86For `CPU` things are somewhat in-flight because the abstraction is close to 87`LLVMIR`. The jury is still out on whether a generic `CPU` dialect is 88concretely needed, but it seems reasonable to have the same levels of 89abstraction for all targets and perform cost-based lowering decisions in MLIR 90even for `LLVM`. 91Specialized `CPU` dialects that would capture specific features not well 92captured by LLVM peephole optimizations of on different types that core MLIR 93supports (e.g. Scalable Vectors) are welcome future extensions. 94 95### Virtual Vector Ops 96Some existing Standard and Vector Dialect on `n-D` `vector` types comprise: 97``` 98%2 = std.addf %0, %1 : vector<3x7x8xf32> // -> vector<3x7x8xf32> 99%2 = std.mulf %0, %1 : vector<3x7x8xf32> // -> vector<3x7x8xf32> 100%2 = std.splat %1 : vector<3x7x8xf32> // -> vector<3x7x8xf32> 101 102%1 = vector.extract %0[1]: vector<3x7x8xf32> // -> vector<7x8xf32> 103%1 = vector.extract %0[1, 5]: vector<3x7x8xf32> // -> vector<8xf32> 104%2 = vector.outerproduct %0, %1: vector<4xf32>, vector<8xf32> // -> vector<4x8xf32> 105%3 = vector.outerproduct %0, %1, %2: vector<4xf32>, vector<8xf32> // fma when adding %2 106%3 = vector.strided_slice %0 {offsets = [2, 2], sizes = [2, 2], strides = [1, 1]}: 107 vector<4x8x16xf32> // Returns a slice of type vector<2x2x16xf32> 108 109%2 = vector.transfer_read %A[%0, %1] 110 {permutation_map = (d0, d1) -> (d0)}: memref<7x?xf32>, vector<4xf32> 111 112vector.transfer_write %f1, %A[%i0, %i1, %i2, %i3] 113 {permutation_map = (d0, d1, d2, d3) -> (d3, d1, d0)} : 114 vector<5x4x3xf32>, memref<?x?x?x?xf32> 115``` 116 117The list of Vector is currently undergoing evolutions and is best kept 118track of by following the evolution of the 119[VectorOps.td](https://github.com/llvm/llvm-project/blob/master/mlir/include/mlir/Dialect/Vector/VectorOps.td) 120ODS file (markdown documentation is automatically generated locally when 121building and populates the [Vector 122doc](https://github.com/llvm/llvm-project/blob/master/mlir/docs/Dialects/Vector.md)). Recent 123extensions are driven by concrete use cases of interest. A notable such use 124case is the `vector.contract` op which applies principles of the StructuredOps 125abstraction to `vector` types. 126 127### Virtual Vector Rewrite Patterns 128 129The following rewrite patterns exist at the `VV->VV` level: 130 1311. The now retired `MaterializeVector` pass used to legalize ops on a 132coarse-grained virtual `vector` to a finer-grained virtual `vector` by 133unrolling. This has been rewritten as a retargetable unroll-and-jam pattern on 134`vector` ops and `vector` types. 1352. The lowering of `vector_transfer` ops legalizes `vector` load/store ops to 136permuted loops over scalar load/stores. This should evolve to loops over 137`vector` load/stores + `mask` operations as they become available `vector` ops 138at the `VV` level. 139 140The general direction is to add more Virtual Vector level ops and implement 141more useful `VV -> VV` rewrites as composable patterns that the PatternRewrite 142infrastructure can apply iteratively. 143 144### Virtual Vector to Hardware Vector Lowering 145For now, `VV -> HWV` are specified in C++ (see for instance the 146[SplatOpLowering for n-D 147vectors](https://github.com/tensorflow/mlir/commit/0a0c4867c6a6fcb0a2f17ef26a791c1d551fe33d) 148or the [VectorOuterProductOp 149lowering](https://github.com/tensorflow/mlir/commit/957b1ca9680b4aacabb3a480fbc4ebd2506334b8)). 150 151Simple [conversion 152tests](https://github.com/llvm/llvm-project/blob/master/mlir/test/Conversion/VectorToLLVM/vector-to-llvm.mlir) 153are available for the `LLVM` target starting from the Virtual Vector Level. 154 155## Rationale 156### Hardware as `vector` Machines of Minimum Granularity 157 158Higher-dimensional `vector`s are ubiquitous in modern HPC hardware. One way to 159think about Generic Retargetable `vector`-Level Dialect is that it operates on 160`vector` types that are a multiples of a "good" `vector` size so the HW can 161efficiently implement a set of high-level primitives 162(e.g. `vector<8x8x8x16xf32>` when HW `vector` size is say `vector<4x8xf32>`). 163 164Some notable `vector` sizes of interest include: 165 1661. CPU: `vector<HW_vector_size * k>`, `vector<core_count * k’ x 167HW_vector_size * k>` and `vector<socket_count x core_count * k’ x 168HW_vector_size * k>` 1692. GPU: `vector<warp_size * k>`, `vector<warp_size * k x float4>` and 170`vector<warp_size * k x 4 x 4 x 4>` for tensor_core sizes, 1713. Other accelerators: n-D `vector` as first-class citizens in the HW. 172 173Depending on the target, ops on sizes that are not multiples of the HW 174`vector` size may either produce slow code (e.g. by going through `LLVM` 175legalization) or may not legalize at all (e.g. some unsupported accelerator X 176combination of ops and types). 177 178### Transformations Problems Avoided 179A `vector<16x32x64xf32>` virtual `vector` is a coarse-grained type that can be 180“unrolled” to HW-specific sizes. The multi-dimensional unrolling factors are 181carried in the IR by the `vector` type. After unrolling, traditional 182instruction-level scheduling can be run. 183 184The following key transformations (along with the supporting analyses and 185structural constraints) are completely avoided by operating on a ``vector`` 186`ssa-value` abstraction: 187 1881. Loop unroll and unroll-and-jam. 1892. Loop and load-store restructuring for register reuse. 1903. Load to store forwarding and Mem2reg. 1914. Coarsening (raising) from finer-grained `vector` form. 192 193Note that “unrolling” in the context of `vector`s corresponds to partial loop 194unroll-and-jam and not full unrolling. As a consequence this is expected to 195compose with SW pipelining where applicable and does not result in ICache blow 196up. 197 198### The Big Out-Of-Scope Piece: Automatic Vectorization 199One important piece not discussed here is automatic vectorization 200(automatically raising from scalar to n-D `vector` ops and types). The TL;DR 201is that when the first "super-vectorization" prototype was implemented, MLIR 202was nowhere near as mature as it is today. As we continue building more 203abstractions in `VV -> HWV`, there is an opportunity to revisit vectorization 204in MLIR. 205 206Since this topic touches on codegen abstractions, it is technically out of the 207scope of this survey document but there is a lot to discuss in light of 208structured op type representations and how a vectorization transformation can 209be reused across dialects. In particular, MLIR allows the definition of 210dialects at arbitrary levels of granularity and lends itself favorably to 211progressive lowering. The argument can be made that automatic vectorization on 212a loops + ops abstraction is akin to raising structural information that has 213been lost. Instead, it is possible to revisit vectorization as simple pattern 214rewrites, provided the IR is in a suitable form. For instance, vectorizing a 215`linalg.generic` op whose semantics match a `matmul` can be done [quite easily 216with a 217pattern](https://github.com/tensorflow/mlir/commit/bff722d6b59ab99b998f0c2b9fccd0267d9f93b5). In 218fact this pattern is trivial to generalize to any type of contraction when 219targeting the `vector.contract` op, as well as to any field (`+/*`, `min/+`, 220`max/+`, `or/and`, `logsumexp/+` ...) . In other words, by operating on a 221higher level of generic abstractions than affine loops, non-trivial 222transformations become significantly simpler and composable at a finer 223granularity. 224 225Irrespective of the existence of an auto-vectorizer, one can build a notional 226vector language based on the VectorOps dialect and build end-to-end models 227with expressing `vector`s in the IR directly and simple 228pattern-rewrites. [EDSC](https://github.com/llvm/llvm-project/blob/master/mlir/docs/EDSC.md)s 229provide a simple way of driving such a notional language directly in C++. 230 231## Bikeshed Naming Discussion 232There are arguments against naming an n-D level of abstraction `vector` 233because most people associate it with 1-D `vector`s. On the other hand, 234`vector`s are first-class n-D values in MLIR. 235The alternative name Tile has been proposed, which conveys higher-D 236meaning. But it also is one of the most overloaded terms in compilers and 237hardware. 238For now, we generally use the `n-D` `vector` name and are open to better 239suggestions. 240 241## DeeperDive 242 243This section describes the tradeoffs involved in lowering the MLIR n-D vector 244type and operations on it to LLVM-IR. Putting aside the [LLVM 245Matrix](http://lists.llvm.org/pipermail/llvm-dev/2018-October/126871.html) 246proposal for now, this assumes LLVM only has built-in support for 1-D 247vector. The relationship with the LLVM Matrix proposal is discussed at the end 248of this document. 249 250MLIR does not currently support dynamic vector sizes (i.e. SVE style) so the 251discussion is limited to static rank and static vector sizes 252(e.g. `vector<4x8x16x32xf32>`). This section discusses operations on vectors 253in LLVM and MLIR. 254 255LLVM instructions are prefixed by the `llvm.` dialect prefix 256(e.g. `llvm.insertvalue`). Such ops operate exclusively on 1-D vectors and 257aggregates following the [LLVM LangRef](https://llvm.org/docs/LangRef.html). 258MLIR operations are prefixed by the `vector.` dialect prefix 259(e.g. `vector.insertelement`). Such ops operate exclusively on MLIR `n-D` 260`vector` types. 261 262### Alternatives For Lowering an n-D Vector Type to LLVM 263Consider a vector of rank n with static sizes `{s_0, ... s_{n-1}}` (i.e. an 264MLIR `vector<s_0x...s_{n-1}xf32>`). Lowering such an `n-D` MLIR vector type to 265an LLVM descriptor can be done by either: 266 2671. Flattening to a `1-D` vector: `!llvm<"(s_0*...*s_{n-1})xfloat">` in the 268MLIR LLVM dialect. 2692. Nested aggregate type of `1-D` vector: 270`!llvm<"[s_0x[s_1x[...<s_{n-1}xfloat>]]]">` in the MLIR LLVM dialect. 2713. A mix of both. 272 273There are multiple tradeoffs involved in choosing one or the other that we 274discuss. It is important to note that “a mix of both” immediately reduces to 275“nested aggregate type of 1-D vector” with a `vector.cast %0: 276vector<4x8x16x32xf32> to vector<4x4096xf32>` operation, that flattens the most 277"k" minor dimensions. 278 279### Constraints Inherited from LLVM (see LangRef) 280The first constraint was already mentioned: LLVM only supports `1-D` `vector` 281types natively. 282Additional constraints are related to the difference in LLVM between vector 283and aggregate types: 284``` 285 “Aggregate Types are a subset of derived types that can contain multiple 286 member types. Arrays and structs are aggregate types. Vectors are not 287 considered to be aggregate types.”. 288``` 289 290This distinction is also reflected in some of the operations. For `1-D` 291vectors, the operations `llvm.extractelement`, `llvm.insertelement`, and 292`llvm.shufflevector` apply, with direct support for dynamic indices. For `n-D` 293vectors with `n>1`, and thus aggregate types at LLVM level, the more 294restrictive operations `llvm.extractvalue` and `llvm.insertvalue` apply, which 295only accept static indices. There is no direct shuffling support for aggregate 296types. 297 298The next sentence illustrates a recurrent tradeoff, also found in MLIR, 299between “value types” (subject to SSA use-def chains) and “memory types” 300(subject to aliasing and side-effects): 301``` 302“Structures in memory are accessed using ‘load’ and ‘store’ by getting a 303pointer to a field with the llvm.getelementptr instruction. Structures in 304registers are accessed using the llvm.extractvalue and llvm.insertvalue 305instructions.” 306``` 307 308When transposing this to MLIR, `llvm.getelementptr` works on pointers to `n-D` 309vectors in memory. For `n-D`, vectors values that live in registers we can use 310`vector.extract` and `vector.insert` which do not accept dynamic indices. Note 311that this is consistent with hardware considerations as discussed below. 312 313An alternative is to use an LLVM `1-D` `vector` type for which one can use 314`llvm.extractelement`, `llvm.insertelement` and `llvm.shufflevector`. These 315operations accept dynamic indices. The implication is that one has to use a 316flattened lowering of an MLIR n-D vector to an LLVM 1-D vector. 317 318There are multiple tradeoffs involved that mix implications on the programming 319model, execution on actual HW and what is visible or hidden from codegen. They 320are discussed in the following sections. 321 322### Nested Aggregate 323Pros: 324 3251. Natural encoding n-D vector -> (n-1)-D aggregate over 1-D vector. 3262. No need for linearization / delinearization logic inserted everywhere. 3273. `llvm.insertvalue`, `llvm.extractvalue` of `(n-k)-D` aggregate is natural. 3284. `llvm.insertelement`, `llvm.extractelement`, `llvm.shufflevector` over 329`1-D` vector type is natural. 330 331Cons: 332 3331. `llvm.insertvalue` / `llvm.extractvalue` does not accept dynamic indices 334but only static ones. 3352. Dynamic indexing on the non-most-minor dimension requires roundtrips to 336memory. 3373. Special intrinsics and native instructions in LLVM operate on `1-D` 338vectors. This is not expected to be a practical limitation thanks to a 339`vector.cast %0: vector<4x8x16x32xf32> to vector<4x4096xf32>` operation, that 340flattens the most minor dimensions (see the bigger picture in implications on 341codegen). 342 343### Flattened 1-D Vector Type 344 345Pros: 346 3471. `insertelement` / `extractelement` / `shufflevector` with dynamic indexing 348is possible over the whole lowered `n-D` vector type. 3492. Supports special intrinsics and native operations. 350 351Cons: 3521. Requires linearization/delinearization logic everywhere, translations are 353complex. 3542. Hides away the real HW structure behind dynamic indexing: at the end of the 355day, HW vector sizes are generally fixed and multiple vectors will be needed 356to hold a vector that is larger than the HW. 3573. Unlikely peephole optimizations will result in good code: arbitrary dynamic 358accesses, especially at HW vector boundaries unlikely to result in regular 359patterns. 360 361### Discussion 362#### HW Vectors and Implications on the SW and the Programming Model 363As of today, the LLVM model only support `1-D` vector types. This is 364unsurprising because historically, the vast majority of HW only supports `1-D` 365vector registers. We note that multiple HW vendors are in the process of 366evolving to higher-dimensional physical vectors. 367 368In the following discussion, let's assume the HW vector size is `1-D` and the 369SW vector size is `n-D`, with `n >= 1`. The same discussion would apply with 370`2-D` HW `vector` size and `n >= 2`. In this context, most HW exhibit a vector 371register file. The number of such vectors is fixed. 372Depending on the rank and sizes of the SW vector abstraction and the HW vector 373sizes and number of registers, an `n-D` SW vector type may be materialized by 374a mix of multiple `1-D` HW vector registers + memory locations at a given 375point in time. 376 377The implication of the physical HW constraints on the programming model are 378that one cannot index dynamically across hardware registers: a register file 379can generally not be indexed dynamically. This is because the register number 380is fixed and one either needs to unroll explicitly to obtain fixed register 381numbers or go through memory. This is a constraint familiar to CUDA 382programmers: when declaring a `private float a[4]`; and subsequently indexing 383with a *dynamic* value results in so-called **local memory** usage 384(i.e. roundtripping to memory). 385 386#### Implication on codegen 387MLIR `n-D` vector types are currently represented as `(n-1)-D` arrays of `1-D` 388vectors when lowered to LLVM. 389This introduces the consequences on static vs dynamic indexing discussed 390previously: `extractelement`, `insertelement` and `shufflevector` on `n-D` 391vectors in MLIR only support static indices. Dynamic indices are only 392supported on the most minor `1-D` vector but not the outer `(n-1)-D`. 393For other cases, explicit load / stores are required. 394 395The implications on codegen are as follows: 396 3971. Loops around `vector` values are indirect addressing of vector values, they 398must operate on explicit load / store operations over `n-D` vector types. 3992. Once an `n-D` `vector` type is loaded into an SSA value (that may or may 400not live in `n` registers, with or without spilling, when eventually lowered), 401it may be unrolled to smaller `k-D` `vector` types and operations that 402correspond to the HW. This level of MLIR codegen is related to register 403allocation and spilling that occur much later in the LLVM pipeline. 4043. HW may support >1-D vectors with intrinsics for indirect addressing within 405these vectors. These can be targeted thanks to explicit `vector_cast` 406operations from MLIR `k-D` vector types and operations to LLVM `1-D` vectors + 407intrinsics. 408 409Alternatively, we argue that directly lowering to a linearized abstraction 410hides away the codegen complexities related to memory accesses by giving a 411false impression of magical dynamic indexing across registers. Instead we 412prefer to make those very explicit in MLIR and allow codegen to explore 413tradeoffs. 414Different HW will require different tradeoffs in the sizes involved in steps 4151., 2. and 3. 416 417Decisions made at the MLIR level will have implications at a much later stage 418in LLVM (after register allocation). We do not envision to expose concerns 419related to modeling of register allocation and spilling to MLIR 420explicitly. Instead, each target will expose a set of "good" target operations 421and `n-D` vector types, associated with costs that `PatterRewriters` at the 422MLIR level will be able to target. Such costs at the MLIR level will be 423abstract and used for ranking, not for accurate performance modeling. In the 424future such costs will be learned. 425 426#### Implication on Lowering to Accelerators 427To target accelerators that support higher dimensional vectors natively, we 428can start from either `1-D` or `n-D` vectors in MLIR and use `vector.cast` to 429flatten the most minor dimensions to `1-D` `vector<Kxf32>` where `K` is an 430appropriate constant. Then, the existing lowering to LLVM-IR immediately 431applies, with extensions for accelerator-specific intrinsics. 432 433It is the role of an Accelerator-specific vector dialect (see codegen flow in 434the figure above) to lower the `vector.cast`. Accelerator -> LLVM lowering 435would then consist of a bunch of `Accelerator -> Accelerator` rewrites to 436perform the casts composed with `Accelerator -> LLVM` conversions + intrinsics 437that operate on `1-D` `vector<Kxf32>`. 438 439Some of those rewrites may need extra handling, especially if a reduction is 440involved. For example, `vector.cast %0: vector<K1x...xKnxf32> to 441vector<Kxf32>` when `K != K1 * … * Kn` and some arbitrary irregular 442`vector.cast %0: vector<4x4x17xf32> to vector<Kxf32>` may introduce masking 443and intra-vector shuffling that may not be worthwhile or even feasible, 444i.e. infinite cost. 445 446However `vector.cast %0: vector<K1x...xKnxf32> to vector<Kxf32>` when `K = 447K1 * … * Kn` should be close to a noop. 448 449As we start building accelerator-specific abstractions, we hope to achieve 450retargetable codegen: the same infra is used for CPU, GPU and accelerators 451with extra MLIR patterns and costs. 452 453#### Implication on calling external functions that operate on vectors 454It is possible (likely) that we additionally need to linearize when calling an 455external function. 456 457### Relationship to LLVM matrix type proposal. 458The LLVM matrix proposal was formulated 1 year ago but seemed to be somewhat 459stalled until recently. In its current form, it is limited to 2-D matrix types 460and operations are implemented with LLVM intrinsics. 461In contrast, MLIR sits at a higher level of abstraction and allows the 462lowering of generic operations on generic n-D vector types from MLIR to 463aggregates of 1-D LLVM vectors. 464In the future, it could make sense to lower to the LLVM matrix abstraction 465also for CPU even though MLIR will continue needing higher level abstractions. 466 467On the other hand, one should note that as MLIR is moving to LLVM, this 468document could become the unifying abstraction that people should target for 469>1-D vectors and the LLVM matrix proposal can be viewed as a subset of this 470work. 471 472### Conclusion 473The flattened 1-D vector design in the LLVM matrix proposal is good in a 474HW-specific world with special intrinsics. This is a good abstraction for 475register allocation, Instruction-Level-Parallelism and 476SoftWare-Pipelining/Modulo Scheduling optimizations at the register level. 477However MLIR codegen operates at a higher level of abstraction where we want 478to target operations on coarser-grained vectors than the HW size and on which 479unroll-and-jam is applied and patterns across multiple HW vectors can be 480matched. 481 482This makes “nested aggregate type of 1-D vector” an appealing abstraction for 483lowering from MLIR because: 484 4851. it does not hide complexity related to the buffer vs value semantics and 486the memory subsystem and 4872. it does not rely on LLVM to magically make all the things work from a too 488low-level abstraction. 489 490The use of special intrinsics in a `1-D` LLVM world is still available thanks 491to an explicit `vector.cast` op. 492 493## Operations 494 495[include "Dialects/VectorOps.md"] 496